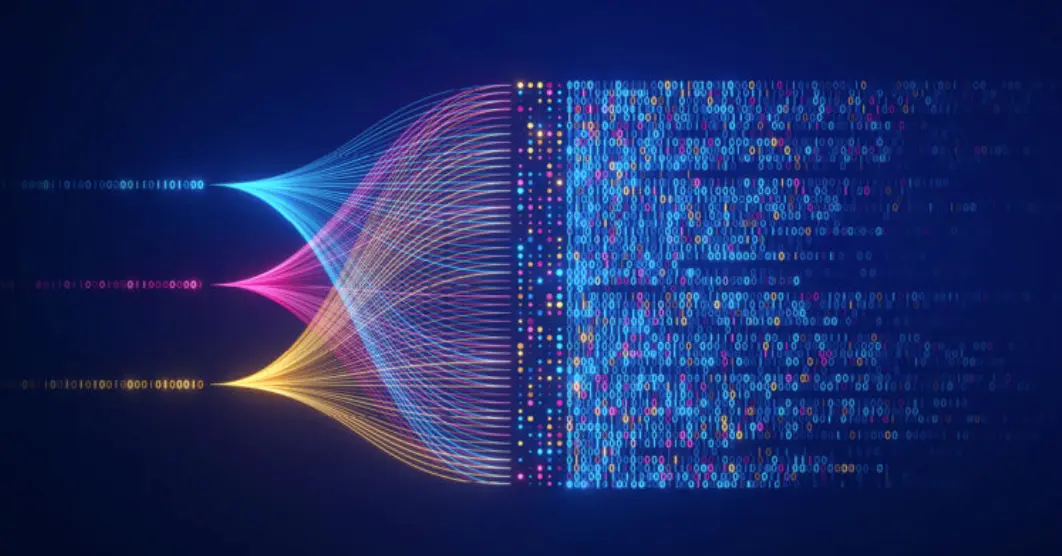

Understanding Tokenization in Natural Language Processing

Tokenization is a fundamental step in Natural Language Processing (NLP) that involves breaking down text into smaller units...
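
As a rough illustration of what "breaking down text into smaller units" can look like, here is a minimal sketch of a word-level tokenizer in Python. The function name `simple_tokenize` and the regular expression are assumptions chosen for this example, not something the article prescribes; real NLP pipelines often use library tokenizers or subword schemes instead.

```python
import re


def simple_tokenize(text: str) -> list[str]:
    """Split text into word tokens and single punctuation tokens.

    Hypothetical helper for illustration only: \\w+ matches a run of
    letters, digits, or underscores, and [^\\w\\s] matches one character
    that is neither a word character nor whitespace (i.e. punctuation).
    """
    return re.findall(r"\w+|[^\w\s]", text)


if __name__ == "__main__":
    tokens = simple_tokenize("Tokenization breaks text into units.")
    print(tokens)
    # ['Tokenization', 'breaks', 'text', 'into', 'units', '.']
```

This rule treats whitespace purely as a separator and keeps each punctuation mark as its own token. It is a deliberately simple choice: contractions such as "don't" would be split into three tokens, which a production tokenizer would likely handle differently.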

